Impact of Artificial Intelligence on Airline Revenue Management

Artificial Intelligence (AI) is one of the most transformative technologies of our time, reshaping decision-making across all industries.

For airline Revenue Management (RM), this raises a pressing question: what will AI’s impact be? Could decades of proven RM science suddenly become obsolete, or will AI’s role be more complementary – augmenting RM Systems (RMS) rather than replacing them?

For many RM professionals, the answer to these questions is both exciting and unsettling.

RM Science has been resilient over the past four decades

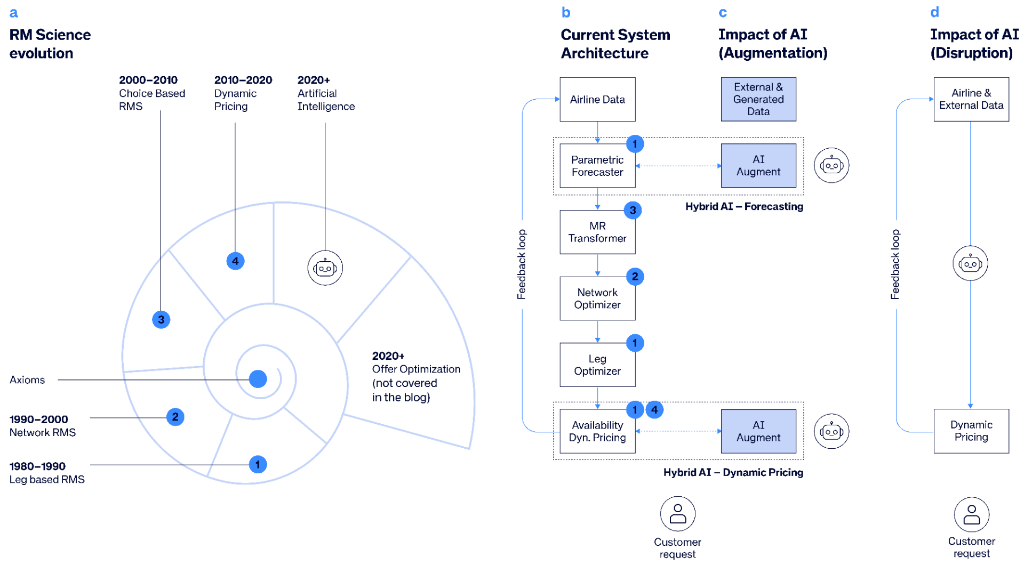

I was recently honored as an AGIFORS Fellow at the AGIFORS 2025 Symposium in London [1], where I delivered a talk on “The Simplicity and Beauty of Revenue Management.” The central theme of that talk was that RM science has proven remarkably resilient for more than four decades. Like a growing shell (see figure (a)), the RMS has evolved over the years by adding components of sophistication: leg optimization, network optimization, choice modeling, dynamic pricing, and offer management, while its core scientific foundation (the axioms) has remained unchanged. In essence, the axioms define the economic trade-off between accepting a booking now and saving the seat for potential future demand.

Caption:

a. RMS evolution can be visualized as a growing shell, with new chambers representing added components of sophistication over time. The core axioms remain intact, and each new component builds on the previous ones rather than replacing them.

b. This evolution maps directly to the RMS architecture: for example, the network RMS of the 1990s included components 1 and 2 only, while today’s modern RMS integrates all components 1–4.

c. AI is already part of this evolution, in particular through hybrid forecasting and hybrid DP, which combine classical parametric models with AI models.

d. AI disruption: replacing the classical RMS end to end with an “AI-native” solution.

How can AI and Gen AI be applied to improve RM?

Modern AI techniques, including the Deep Learning (DL) behind today’s large language models (LLMs), have shown an extraordinary ability to learn complex patterns from massive datasets and deliver high predictive accuracy across many domains. Generative AI (GenAI) extends this capability by using LLMs to produce new content such as text, code, images, and structured plans from natural‑language prompts.

We focus on three areas where we see the greatest promise for these technologies within the structure of RMS shown above:

- Hybrid-AI Forecasting

- Hybrid-AI Air and Ancillary Pricing Optimization

- Supporting a Revenue Management Analyst’s workflow

1. Hybrid-AI Forecasting

In a paper published last year in the Journal of Revenue and Pricing Management [2], we introduced a method for hybrid-AI forecasting that combines the strengths of classical parametric forecasting with AI models, see figure (c). The parametric forecast model provides an interpretable baseline, while the AI model learns the residual pattern the baseline misses, resulting in improved forecast accuracy.
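As a minimal sketch of the baseline-plus-residual idea, the Python example below fits a parametric model first and then a second model on what the baseline leaves unexplained. Everything in it is an assumption made for illustration: the exponential price-demand form, the synthetic data, the day-of-week feature, and the ridge regression that stands in for the AI model. It is not the method published in [2].

```python
import numpy as np

def fit_parametric(price, demand):
    """Fit log(demand) = a + b * price by least squares (assumed baseline form)."""
    X = np.column_stack([np.ones_like(price), price])
    coef, *_ = np.linalg.lstsq(X, np.log(demand), rcond=None)
    return coef

def predict_parametric(coef, price):
    return np.exp(coef[0] + coef[1] * price)

def residual_features(day_of_week):
    """One-hot day-of-week features seen only by the residual model."""
    return np.eye(7)[day_of_week]

def fit_residual(features, residual, ridge=1e-3):
    """Ridge regression, a simple stand-in for the AI component."""
    A = features.T @ features + ridge * np.eye(features.shape[1])
    return np.linalg.solve(A, features.T @ residual)

# Synthetic bookings (hypothetical): demand falls with price and
# carries a day-of-week pattern the parametric baseline cannot see.
rng = np.random.default_rng(0)
n = 700
price = rng.uniform(80.0, 200.0, n)
dow = rng.integers(0, 7, n)
dow_effect = np.array([0.3, 0.0, -0.2, -0.1, 0.1, 0.4, -0.3])
demand = np.exp(5.0 - 0.01 * price + dow_effect[dow] + rng.normal(0.0, 0.05, n))

# Step 1: interpretable baseline.
coef = fit_parametric(price, demand)
baseline = predict_parametric(coef, price)

# Step 2: learn the residual (in log space) that the baseline misses.
residual = np.log(demand) - np.log(baseline)
weights = fit_residual(residual_features(dow), residual)

# Step 3: hybrid forecast = baseline times learned correction.
hybrid = baseline * np.exp(residual_features(dow) @ weights)

rmse_baseline = float(np.sqrt(np.mean((demand - baseline) ** 2)))
rmse_hybrid = float(np.sqrt(np.mean((demand - hybrid) ** 2)))
print(f"baseline RMSE: {rmse_baseline:.2f}, hybrid RMSE: {rmse_hybrid:.2f}")
```

Because the price slope is estimated only in the parametric step, the price-demand relationship keeps its sign no matter what the residual model learns, which is the kind of anchoring the hybrid design is meant to provide.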

Hybridization also opens the door to solving long-standing challenges in forecasting, such as demand sponsorship. For markets with little or no historical data, airlines face a cold‑start problem, and the forecast has to be borrowed from comparable “sponsor” markets. In our research [3], we applied GenAI by prompting an LLM for traveler‑oriented descriptions of each destination, then converting these descriptions into embeddings (vector representations that place similar destinations close together). This embedding space then supports nearest‑neighbor retrieval to identify suitable sponsor markets.
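The retrieval step can be sketched in a few lines of Python. The market names, the four-dimensional vectors, and the choice of cosine similarity are all hypothetical; real embeddings would come from an embedding model applied to the LLM-generated descriptions and would have far more dimensions.

```python
import numpy as np

def nearest_sponsors(query, candidates, k=2):
    """Return the k candidate markets most similar to the query embedding,
    ranked by cosine similarity (an assumed similarity measure)."""
    names = list(candidates)
    matrix = np.array([candidates[name] for name in names], dtype=float)
    q = np.asarray(query, dtype=float)
    sims = (matrix @ q) / (np.linalg.norm(matrix, axis=1) * np.linalg.norm(q))
    order = np.argsort(-sims)[:k]
    return [(names[i], float(sims[i])) for i in order]

# Hypothetical toy embeddings for markets with booking history.
# The dimensions are made up: (beach, city, ski, business).
existing_markets = {
    "BEACH_A": [0.9, 0.2, 0.0, 0.1],
    "BEACH_B": [0.8, 0.4, 0.0, 0.2],
    "SKI_A":   [0.0, 0.4, 0.7, 0.6],
    "HUB_A":   [0.0, 0.6, 0.0, 0.9],
}

# Embedding of a new destination with no booking history.
new_market = [0.85, 0.3, 0.0, 0.1]

sponsors = nearest_sponsors(new_market, existing_markets, k=2)
for name, similarity in sponsors:
    print(f"{name}: cosine similarity {similarity:.3f}")
```

In this toy setup the two beach-like markets come out as the closest sponsors for the new beach-like destination.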

As a benchmark study, we evaluated a continuum of demand forecast models: from a pure parametric baseline, through a sequence of hybrid variants with progressively larger AI responsibilities, to a pure AI model. All AI components were built on state‑of‑the‑art DL transformer architectures, and the best forecaster was selected solely on the basis of predictive accuracy. Across realistic airline datasets, the hybrid variants consistently achieved the highest accuracy, outperforming both the parametric baseline and the pure AI model.

The pure AI models underperformed because they lack built‑in business knowledge. Without such structural constraints, the models may generate nonsensical outcomes such as inverted price–demand relationships. In addition, airline data does not provide the volume or price variation needed to train very large models effectively. Even if a stronger AI‑only model were engineered, it would still need to relearn the structural relationships already encoded in the parametric model. By contrast, the hybrid design incorporates this structure by construction, allowing targeted and controllable AI adjustments anchored in a stable reference.

2. Hybrid-AI Air and Ancillary Pricing Optimization

The hybrid design extends naturally to dynamic pricing (DP) for both flights and ancillaries. To understand how AI contributes in this context, let's look at the objective of DP. In each shopping session, given the shopping context, DP selects the price $p_{DP}$ that maximizes the expected contribution, defined as the margin (price minus bid price) multiplied by the purchase probability at that price for that context. Expressed mathematically, this objective is:

$$p_{DP} = \arg\max_{p} \; (p - BP) \cdot \mathrm{Prob}_{DP}(p \mid \mathrm{context})$$

where $BP$ denotes the bid price from the RMS and $\mathrm{Prob}_{DP}(p \mid \mathrm{context})$ the DP purchase probability at price $p$ given the shopping context.

Using the same objective, with the purchase probability $\mathrm{Prob}_{RMS}(p)$ obtained from the RMS hybrid forecaster, we compute a continuous reference price $p_{RMS}$. This is typically mapped to a class-based availability for traditional distribution, although this is not a strict requirement. Unlike $p_{DP}$, the reference price from the RMS is not contextual. The DP price can be expressed mathematically as follows:

$$p_{DP} = p_{RMS} + \Delta p(\mathrm{context})$$

which aligns with the hybrid approach: an interpretable baseline $p_{RMS}$ plus a learned correction $\Delta p$ [4].

AI models can directly compute the price correction using a rich set of features including passenger preferences, trip characteristics, and competitor positions; or actively perform price experimentation when historic data volume or price variation is limited.
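To make the pricing objective concrete, here is a minimal Python sketch that maximizes margin times purchase probability over a price grid, once with a non-contextual probability (the reference price) and once with a contextual one (the DP price). The logistic purchase-probability curve, its parameters, the bid price of 100, and the grid are all assumptions for illustration. Note also that the sketch recovers the correction as the difference between the two optima, whereas a production system could have an AI model predict the correction directly from context features.

```python
import numpy as np

def purchase_prob(price, midpoint, sensitivity):
    """Assumed logistic purchase probability: 0.5 at `midpoint`,
    falling as price rises; `sensitivity` controls how fast."""
    return 1.0 / (1.0 + np.exp(sensitivity * (price - midpoint)))

def optimal_price(bid_price, prob_fn, grid):
    """Grid search for the price maximizing (p - BP) * Prob(p)."""
    contribution = (grid - bid_price) * prob_fn(grid)
    return float(grid[np.argmax(contribution)])

grid = np.linspace(50.0, 400.0, 3501)  # candidate prices in steps of 0.1
bid_price = 100.0                      # hypothetical bid price from the RMS

# Reference price: non-contextual purchase probability from the RMS forecaster.
p_rms = optimal_price(bid_price, lambda p: purchase_prob(p, 180.0, 0.03), grid)

# DP price: same objective, but the probability reflects the shopping context
# (here a hypothetical, less price-sensitive context).
p_dp = optimal_price(bid_price, lambda p: purchase_prob(p, 220.0, 0.02), grid)

# Contextual correction on top of the interpretable baseline.
delta_p = p_dp - p_rms
print(f"p_RMS = {p_rms:.1f}, p_DP = {p_dp:.1f}, delta_p = {delta_p:.1f}")
```

Both prices land above the bid price, as the objective requires for a positive margin, and the less price-sensitive context yields a positive correction.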

3. Supporting a Revenue Management analyst’s workflow

The introduction of agentic AI capabilities will change how Revenue Management analysts interact with the system. Instead of a static user interface and complex manual rule setup, agentic AI offers a more intuitive, natural‑language interface that supports alert prioritization, insight generation, intervention recommendations, and automation. This architecture integrates both airline data and external data sources (such as event data or competitive data). We are currently developing such agentic AI features in our products.

Could AI disrupt RMS and Offer Optimization end to end?

Finally, given the transformative nature of AI, we might imagine a fully “AI-native” offer optimization system: a system that would take airline data as well as external data as input and directly produce optimal offers and prices as output, skipping over the core scientific foundation of RM entirely. Could this approach work?

In theory, yes. In practice, no!

Reinforcement Learning (RL), a subfield of AI, solves the same type of problem as classical RMS: sequential decisions under capacity constraints to maximize expected revenue. While classical RMS is model‑based, relying on a forecaster to estimate customer arrivals and purchase probabilities, RL (see figure (d)) is model-free, learning by experimenting with prices and observing the resulting choices.
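The model-free idea can be illustrated with a toy tabular Q-learning agent for a single flight. This is a sketch under strong assumptions, not the approach used in our research: the three price points, the 20 booking periods, the 5 seats, the one-shopper-per-period market, and the exponential willingness to pay are all hypothetical. The agent never sees the demand model; it only observes whether a sale occurred at the price it tried.

```python
import numpy as np

PRICES = np.array([100.0, 150.0, 200.0])  # hypothetical price points
T, CAPACITY = 20, 5                       # booking periods and seats

def step(seats, price, rng):
    """Simulated market, hidden from the agent: one shopper per period
    with exponentially distributed willingness to pay (mean 150)."""
    sold = seats > 0 and rng.exponential(150.0) >= price
    return (float(price) if sold else 0.0), seats - int(sold)

def train(episodes=5000, alpha=0.05, epsilon=0.1, seed=0):
    """Tabular Q-learning with epsilon-greedy price experimentation."""
    rng = np.random.default_rng(seed)
    # Q[t, seats, action]; row T stays zero (no value after departure).
    Q = np.zeros((T + 1, CAPACITY + 1, len(PRICES)))
    for _ in range(episodes):
        seats = CAPACITY
        for t in range(T):
            if rng.random() < epsilon:
                action = int(rng.integers(len(PRICES)))  # explore
            else:
                action = int(np.argmax(Q[t, seats]))     # exploit
            reward, next_seats = step(seats, PRICES[action], rng)
            # Update toward observed revenue plus best estimated future value.
            target = reward + Q[t + 1, next_seats].max()
            Q[t, seats, action] += alpha * (target - Q[t, seats, action])
            seats = next_seats
    return Q

def evaluate(Q, episodes=2000, seed=1):
    """Average revenue per departure when following the greedy policy."""
    rng = np.random.default_rng(seed)
    total = 0.0
    for _ in range(episodes):
        seats = CAPACITY
        for t in range(T):
            price = PRICES[int(np.argmax(Q[t, seats]))]
            reward, seats = step(seats, price, rng)
            total += reward
    return total / episodes

Q = train()
avg_revenue = evaluate(Q)
print(f"average revenue per departure: {avg_revenue:.1f}")
```

Even this tiny problem has 20 × 6 × 3 state-action values to learn, and each training episode corresponds to one simulated departure, which hints at why data requirements grow quickly once the problem becomes realistic.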

RL has succeeded in areas with abundant experimentation data and instant reward signals, such as energy control in data centers, adaptive traffic signals, and real‑time ad bidding in digital marketplaces. However, airline RM is different. Historical data is sparse and the airline’s price variation is relatively limited. Further, the business environment is highly non‑stationary: demand shifts with day of week, seasonality, events, and schedule, fleet, and capacity changes.

Our research shows that even in the simplest scenario of a single flight with daily departures in a perfectly stationary environment, RL still requires decades of departure data and extensive price experimentation to achieve revenue performance comparable to classical RMS. This makes a pure RL approach impractical for real-world airline networks.

That said, RL offers intriguing insights. Being model-free, it serves as a test bed to challenge long-held assumptions about optimal pricing strategies. In simulations where an RL agent competed against a classical RMS, we observed that the RL agent discovered unconventional strategies, such as aggressive last-minute discounting, that are not often observed in the airline industry but are common in other industries.

The key takeaway is clear: AI will not replace RM science, but it can spark innovation.

Main takeaway

AI is reshaping industries everywhere and brings exciting new opportunities. For RM specifically, our conclusion is that AI will complement rather than replace RM science. The most promising direction is hybridization, where interpretable parametric models remain central and are enhanced with AI‑driven corrections. In parallel, agentic AI can improve workflows and efficiency by giving RM analysts a more intuitive, conversational interface.

References

Nanty, S., Fiig, T., Zannier, L., & Defoin-Platel, M. (2025). Enhanced demand forecasting by combining analytical models and machine learning models. Journal of Revenue and Pricing Management.

Nanty, S., & Fiig, T. (2024). Application of Generative AI for airline demand forecasting. AGIFORS RM Study Group.

Fiig, T., Wittman, M., & Trescases, C. (2025). Will RMS survive the Offer/Order transformation? AGIFORS RM Study Group.

Bondoux, N., Nguyen, A. Q., Fiig, T., & Acuna-Agost, R. (2020). Reinforcement learning applied to airline revenue management. Journal of Revenue and Pricing Management.

Terminology

Revenue Management System (RMS): comprises modules 1–3 and produces bid prices (BP) that the execution engine translates into booking‑class availability; prices are constructed by mapping the availabilities to filed fares.

Leg-based RMS: The flight network is decomposed into independent legs that are optimized separately.

Network RMS: The flight network is optimized as a whole, taking into account that customer itineraries can connect multiple legs.

Choice‑based RMS: Extension to simplified fare structures (fenceless, fare families, branded fares) applying the Marginal Revenue (MR) Transformation.

Dynamic Pricing (DP): an extension of RMS. DP computes a continuous price that maximizes the contribution of a sale, considering both contextual information about the trip and competitor alternatives.

Deep Learning (DL): a subfield of AI that learns patterns and representations directly from data using multi‑layer neural networks. In this blog we focus on applications for prediction (forecasting) and representation learning (sponsorship).

Reinforcement Learning (RL): a subfield of AI where an agent learns by trial and error to choose actions over time that maximize long‑term reward by updating its strategy from feedback and balancing exploration with exploitation.
